Read the script below

Tory: Hello and welcome to Safeguarding Soundbites.

Colin: This is your weekly podcast for finding out all the latest online safety news and updates. My name’s Colin.

Tory: And I’m Tory. This week, we’re covering new AI chatbots on social media, conflict news on TikTok and how some of the big names of online platforms are coming together to fight online child sexual exploitation and abuse.

Colin: But first up, let’s check in with what’s been happening in social media. Tory?

Tory: So it looks like Instagram and X, formerly Twitter, are the latest platforms to get in on the AI chatbot craze, joining Snapchat and the bots rumoured to soon be appearing on TikTok and Facebook. These chatbots use artificial intelligence to interact with users in a conversational style, often in the form of private messages, as if you were PM-ing another user on the platform.

Much like Snapchat’s ‘My AI’, it’s rumoured that Instagram’s AI ‘friend’ will answer users’ questions, hold conversations and will also be available for brainstorming sessions and talking through problems.

Colin: And over on X, Musk has already launched his bot, called Grok, to select users. Musk has said his bot “loves sarcasm” and gives humorous answers.

Tory: Yep, and he’s also said that – unlike other AI bots – his bot doesn’t refuse to answer what he’s termed ‘spicy’ questions.

Colin: I’ll be honest, I find that a little concerning! Especially given X’s track record with moderation and safeguarding.

Tory: It is a little worrying! Does that mean it will answer questions the other bots refuse to answer because they’re inappropriate, harmful or asking for advice about illegal activities?

Colin: We just don’t know at this point as it’s only been released to a select number of users. This is something we’re going to be keeping an eye on. In the meantime, I’d recommend our listeners head over to our safeguarding apps or our website ineqe.com and search for our article on chatbots to learn more about what they are and get some top tips on chatbots, AI and mitigating the risks for children and young people.

Tory: Definitely worth checking out.

Moving over to TikTok now, who have spoken out after being accused of failing their moderation duties in regard to content relating to the Israel-Hamas war. The platform released a statement saying they have removed more than nine hundred and twenty-five thousand videos from the conflict region and millions more from around the world. They also said they’ve experienced something called “spikes in fake engagement” due to millions of fake accounts and bots.

Colin: In other words, these fake accounts and bots have been created to inflate the views of certain TikTok videos and make the content have more engagement than it otherwise would?

Tory: Yes, exactly. Essentially, it creates fake popularity, which trains the algorithm, and then that video or post is seen by more people.

Colin: In this case, people are doing this to spread a certain message then?

Tory: Probably. Groups or people often use bots to get a certain political agenda out there, or to bring awareness to something, or even just to make themselves more popular to gain fame. But TikTok have been criticised by US lawmakers for disproportionately promoting pro-Palestinian content, which the platform denies. They aren’t the only platform that has come under scrutiny for this, though. Meta has been accused of ‘shadow banning’, which means making posts invisible, on Instagram accounts that are posting about conditions from inside Gaza…something they have since said was down to a bug in their system. And X is under investigation by the European Union for how it’s handling misinformation about the conflict.

Colin: And this is something that’s very common when we have these big distressing events around the world. There tends to be a lot of mis- and disinformation, fake news and it can be really difficult to tell what’s real and what’s being manipulated etc.

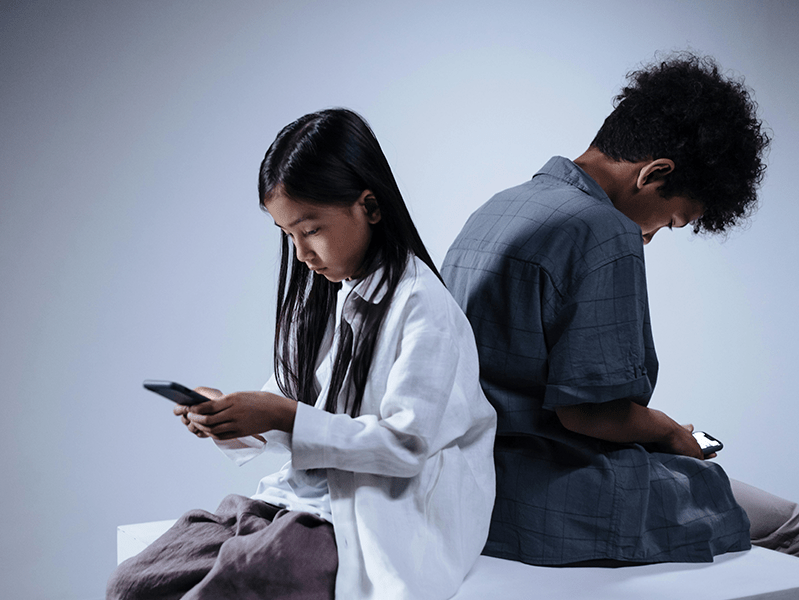

Tory: It can. And we’ve seen research showing that a lot of young people are getting their news from social media including platforms like TikTok. So, not only might it be really confusing for children and young people, but the videos and content can be really upsetting to watch as well.

Colin: So, what can parents, carers, teachers and safeguarding professionals do for children and young people in these types of situations?

Tory: I think firstly, acknowledging their concerns – if a child in your care is coming to you upset or scared about content they’ve seen, don’t dismiss their worries. It’s completely normal for anyone to be upset, at any age!

Be honest too…while you want to protect them, there’s no point lying or pretending you know everything. These situations are complex and divisive and there’s a lot of quick-changing information.

Colin: You can suggest finding out information together, too.

Tory: Absolutely. That leads me onto my next point, which is to think about trustworthy news sources together. For example, if they’ve seen a post online, do they know who posted that or where the footage came from?

You can talk about how and where you can find reliable sources. Does someone sharing a video on TikTok double check the sources? Talk about political slants and bias too, why one person or media outlet might report something in one way, and another in a different way.

Colin: And give yourselves grace to not understand or know what’s true or not.

Tory: Exactly Colin.

Colin: It’s a really important point, which goes back to being honest. We’ve seen stories in the media change from ‘definitely true’ to ‘we’re not sure’…explain that you don’t have all the answers and are learning too!

Tory: Yep, isn’t that the truth! And last but not least, talk about news limits, which means restricting your exposure. As we said, this is really distressing and it’s easy to become almost obsessed with keeping up. But we all have different limits on what we can take in and those limits can vary even day-by-day. It’s okay to avoid watching that TikTok video that’s trending today; it’s okay to turn the news off, put down your phone and go for a walk or watch Netflix for a while!

Colin: It certainly is. It’s so important to take care of yourself and your emotions and mental health…and we need to make sure the children and young people in our care know that.

Tory: Absolutely. You can also download our shareable “Talking to your Child about War and Conflict” which has all of this advice in one handy place. Find it on our website or our range of safeguarding apps.

Colin: Moving on now to our safeguarding success story of the week!

Colin: This is the news that major platforms are teaming up together to fight online predators with a new information sharing program. Called Lantern, the program works as a central database sharing information to help stop predators avoiding detection by moving victims across platforms.

Tory: Ah, in other words, moving a victim from a platform like Facebook over to Discord?

Colin: Yes. So, how they do that is quite complex – it’s using certain signals like associated keywords and hashes in images which are like digital fingerprints for photos and videos. But at its essence, this is about the platforms sharing information that can help flag potential predators and let companies investigate in order to close accounts or report activity to authorities.

Tory: Even if we don’t necessarily understand the tech, we can understand that it’s positive action being taken! Which companies have signed up?

Colin: So far the platforms signed up to Lantern include Discord, Google, Meta, Roblox, Snapchat and Twitch.

Tory: In terms of children and young people, those are some really key companies in there so that’s great.

Colin: It is! So, listeners, we will leave it there for today and of course we’ll be back again next week with more safeguarding news, updates and advice.

Tory: In the meantime, you can access more updates, news and advice from our online safeguarding experts via our safeguarding apps and our website ineqe.com.

Colin: Until next time –

Both: Stay safe.
