Read the script below

Danielle: Hello and welcome to Safeguarding Soundbites, the podcast for catching up on all of this week’s most important online safeguarding news.

Tyla: My name’s Tyla.

Danielle: And I’m Danielle. This week, we’re talking about violent content on social media, Meta’s plans for end-to-end encryption, unregulated drugs being sold on TikTok and the shutdown of Omegle.

Tyla: First up, let’s check in with what’s been happening on social media.

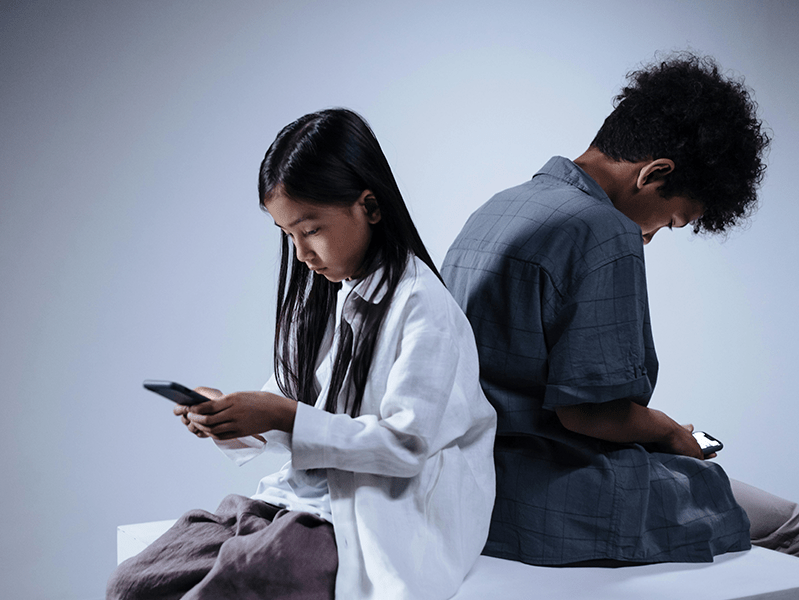

Danielle: Our first topic is a concerning one—young people are viewing graphic content on social media.

Tyla: It absolutely is concerning, Danielle. Research suggests that a third of 13 to 17-year-olds have seen real-life violence on TikTok alone in the past year.

Danielle: These findings from the Youth Endowment Fund also reveal that a quarter of teens saw similar content on Snapchat, 20% on YouTube, and 19% on Instagram.

Tyla: The report also provided insight into how children are encountering such content. Of those surveyed, 27% said the platform had suggested it, half saw it on someone else’s feed and only 9% sought it out deliberately.

Danielle: Wow. Those numbers really emphasise how easy it is for a young person to be accidentally exposed to potentially harmful content. That matters, particularly because long-term exposure to real-life violence can lead to desensitisation and even increased aggression in some young people.

Tyla: This is why the Online Safety Bill has already included ‘Having a content moderation function that allows for the swift take down of illegal content’ as part of its Code of Practice. However, the kind of violent content we’re talking about isn’t always illegal. For example, news stories on social media platforms about the Israel-Hamas war have often contained videos showing aggression, violence and other potentially harmful content.

Danielle: Exactly. And while platforms like TikTok have policies against violent content, and users are encouraged to report material that violates those guidelines, the sheer volume makes it challenging to catch everything. Platforms need to step up their efforts to protect younger users.

Tyla: The big question is, how can parents, teachers and those in safeguarding roles help children to navigate this landscape?

Danielle: Well, it needs to be a collective effort. Educating children about the potential harm of viewing graphic content, utilising content controls to filter out graphic material where possible and encouraging conversations about online experiences are crucial first steps. As always, we have a range of resources to help you with this.

Tyla: That’s right, you can find our ‘Reporting Harmful Content’ or ‘Talking to your Child about War and Conflict’ shareables by visiting our website or by downloading one of our Safeguarding Apps.

Tyla: Meta are facing criticism over their plans to implement end-to-end encryption. For those of you who aren’t familiar with this term, end-to-end encryption essentially ensures that only you and the person you’re communicating with can read what is sent, and nobody in between. For platforms like Facebook and Instagram, it means that even the platform itself can’t access the content of your messages.

Danielle: Now, the concern here arises from the fact that this level of privacy could potentially be misused by those with harmful intentions, particularly when it comes to issues like child exploitation and grooming.

Tyla: Right. Critics argue that by employing end-to-end encryption, Meta may inadvertently provide a shield that lets those with malicious intent operate more discreetly on its platforms, making it harder for authorities to intervene and protect vulnerable users. Meta may be arguing for the benefits of stronger privacy protections, but, as ever, the safety of children must be prioritised.

Danielle: It should be about bringing together the tech industry, policymakers, and safeguarding experts to create a comprehensive approach that protects children without sacrificing online privacy…

Tyla: …Which once again, we hope to see more of as the Online Safety Bill begins to roll out.

Danielle: Omegle, known for its controversial anonymous video chatting function, has officially shut down. Many are breathing a sigh of relief, considering the various risks that were associated with the platform.

Tyla: They certainly are, Danielle. Omegle had become a breeding ground for inappropriate contact and highly sexualised content. Unfortunately, its limited and ineffective age verification meant that many younger users were able to access and use the platform.

Danielle: And this is the exact reason for its closure. One female user reported that Omegle’s defective and negligent design had enabled her to be sexually abused through the site, and she subsequently sued the platform. The abuse spanned three years, after she was randomly paired with a man in his thirties who forced her to take naked photos and videos.

Tyla: What is particularly shocking but unfortunately not unusual for Omegle is that she was just 11 when it began.

Danielle: Yes, and the BBC has reported that the site has been mentioned in more than 50 cases against paedophiles in the last couple of years alone.

The question now is, what comes next? The closure of Omegle creates a dangerous business opportunity for those who might exploit the void it leaves behind.

Tyla: And while it may indeed be a relief to see Omegle go, its closure is likely to lead to increased use of alternative, lesser-known platforms. We’ve already seen this happen with Emerald, a platform that markets itself as “the new Omegle” and offers similar anonymous chat functions and random video matches.

Danielle: To stay informed about the risks of apps that encourage contact between strangers, check out our website or range of Safeguarding Apps.

Tyla: According to a report from the Center for Countering Digital Hate, millions of young people are being exposed to dangerous steroid-like drugs (or SLDs) through TikTok influencers. These influencers are partnering with online vendors to promote unregulated and often illicit substances. The report reveals that influencers frequently target a young audience using hashtags like #TeenBodybuilding.

Danielle: It’s alarming to see how some of these influencers downplay the health risks in their content. They often use before-and-after pictures of dramatic body transformations to glamorise the products they are selling and trivialise any associated dangers.

Tyla: One influencer who was quoted in the report advised viewers to “just tell your parents they’re vitamins.”

Danielle: Certainly shocking, but what exactly are steroid-like drugs?

Tyla: SLDs are a group of synthetic drugs marketed for muscle gain and fat loss. Depending on the product, their sale is restricted or illegal in the UK, and each of the three major types poses serious health threats, including increased risk of heart attack, liver failure and psychosis.

Danielle: Well, if that isn’t risky enough, unfortunately many of these products have been found to be contaminated, mislabelled, or combined with other undisclosed substances.

Tyla: The report also highlights the role of TikTok influencers in boosting the reach of these unregulated products. They significantly increase the audience for sellers, earning lucrative deals and commission on sales through their links.

Danielle: The promotion of SLDs or any drug use by young people is a violation of TikTok’s community guidelines, and the platform claims to remove such content when detected. But there is clearly room for improvement in how it enforces this policy. The CEO of the Center for Countering Digital Hate called on TikTok to enforce stronger policies against drug promotion and urged policymakers to close the loopholes that allow these drugs to be sold online.

Tyla: Moving on now to our safeguarding success story of the week!

Danielle: This week, we’ve got an inspiring story coming from Northampton where a gaming café is taking a unique approach to combatting youth gang culture through an initiative called ‘Games Against Gangs.’

Tyla: Intriguing! Tell us more!

Danielle: Well, this initiative has received over £95,000 in funding from Children in Need. It’s providing a safe space for young people who might otherwise get caught up in antisocial behaviour by using gaming as a tool to teach teamwork and to start discussions about online safety.

Tyla: What a fantastic idea! It sounds like they’re doing more than just playing games then.

Danielle: Exactly. The café, ‘The Yard’, uses games as opportunities to help young people. It’s not just a gaming café; it’s a catalyst for positive change in the community.

Tyla: So, listeners, we’ll leave it on that positive note for today and of course we’ll be back again next week with more safeguarding news, updates and advice.

Tyla: Until next time –

Both: Stay safe.
